The Office 365 family provides a lot of services for you and your organization. In recent weeks I have been playing and working with some of them, like Power BI and MS Flow.

I wrote an article about sharepoint.stackexchange analysis with Power BI. Power BI is a great tool for data analysis. With Power BI Desktop you can create great visualizations of your data and share it with your colleagues or publish on the web. It nicely integrates with modern SharePoint Online as well.

MS Flow provides a way to automate a lot of different processes and simplify business cases. It is also a very powerful tool, but in a different area: process automation. How can you combine all of them and build something useful, engaging and interesting? That's the question I came up with when I was working with these tools. Well, there are a lot of options available. It all depends on your imagination or your concrete business case. I ended up with an interactive feedback analysis system. More...

The year 2018 is over and it's time to perform the regular analysis of data at sharepoint.stackexchange. This is the third edition of this analysis.

Tools used to collect and analyze data:

- Power BI with Power BI Desktop - super cool tools for data analysis. If you don't have experience with Power BI, it's worth trying to see what is possible. When I first tried it two years ago I was sooo impressed by how powerful yet simple it makes data analysis and building visualizations. It works very well for both simple and advanced scenarios. I believe that everybody will find these tools useful for any kind of data analysis.

- DaxStudio - an extremely useful tool for testing your DAX queries. I found it recently and it helped me a lot.

- Power BI Community - Power BI has a very strong community. I found a lot of answers on their forum, and I even asked some questions and the community helped with valid answers. That's not a "tool" but it's worth mentioning. I am grateful for all the answers.

- Google Maps Geocode API

- Stack Exchange API

- osmosis - Node.js web page scraper

The source code used to gather initial data is available at GitHub.

Links

Previous reports:

Disclaimer

All thoughts are mine. Maybe they are not correct or you simply think differently. Please share your thoughts and opinions in comments.

Ok, let's get started! :)

More...

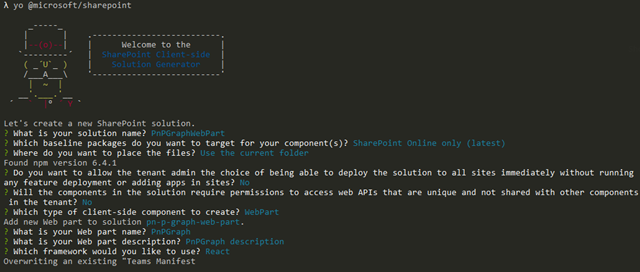

So you want to build an SPFx webpart which uses MS Graph API through PnPjs. Here is a step-by-step guide on how to do that.

A few months ago I wrote a similar post. However, things have changed and some things were simplified a lot, which is why this is a revisited guide. I’ve also addressed some additional steps here. The source code is available in the official SharePoint/sp-dev-fx-webparts GitHub repository.

Prerequisites

- SPFx >= 1.6

- PnPjs >= 1.2.4

1. Scaffold SPFx webpart solution with React

This step is pretty self-explanatory: simply run yo @microsoft/sharepoint, select React, give your webpart a name, and do not change the other defaults offered by yeoman.

More...

While .NET Core support for the SharePoint CSOM libraries is on its way (still no ETA), you can create a SharePoint add-in with ASP.NET Core 2.1 today. Of course, it adds some inconveniences, but at least you can target the latest version of ASP.NET. I’ve created a sample project at GitHub here so you can easily try it. This post describes how to configure everything to run it.

First of all, a few drawbacks worth mentioning:

- you can’t use the F5 experience in Visual Studio for convenient debugging. Instead, you have to manually upload your app to a site and attach Visual Studio to the running web application.

- you can’t target the project to netcoreapp, because SharePoint CSOM for .NET Core is not available yet. That’s why you have to retarget your project to .NET Framework instead, which means that you lose the cross-platform capability. If that’s essential for you, then you should wait for official .NET Core support.

- the solution described here only works for SharePoint Online. On-premises is a completely different story and out of the scope of this post.

Let’s get started. More...

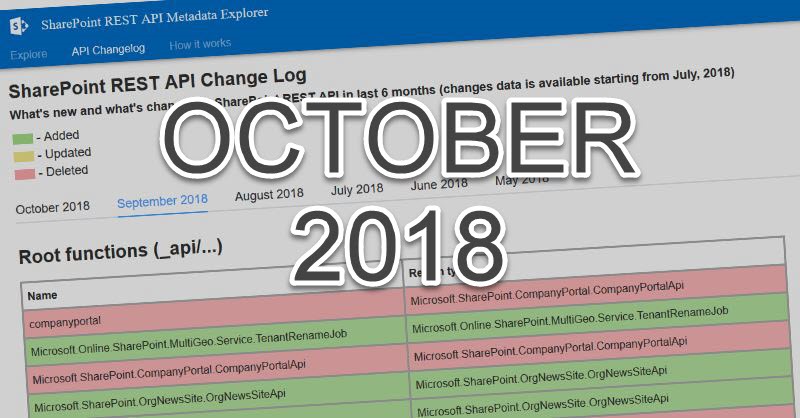

It’s November and it’s time to review the October changes in the SharePoint REST API with the help of SharePoint REST API Explorer. As usual, I have to include the caution below:

Please note that all changes are gathered from a Targeted tenant. Most likely these changes haven’t been officially introduced yet, so use this post as a spoiler of potential upcoming features. If you want to use the APIs mentioned here, please check the corresponding official documentation to make sure they are available.

With REST API explorer you can navigate between different endpoints, explore their structure, methods, classes and parameters. REST API explorer uses the _api/$metadata endpoint to get the REST API data, parses it and presents it in a tree view format. REST API explorer also stores historical $metadata results in Azure storage, making it possible to compare the $metadata results we have today with those from a month ago. More...

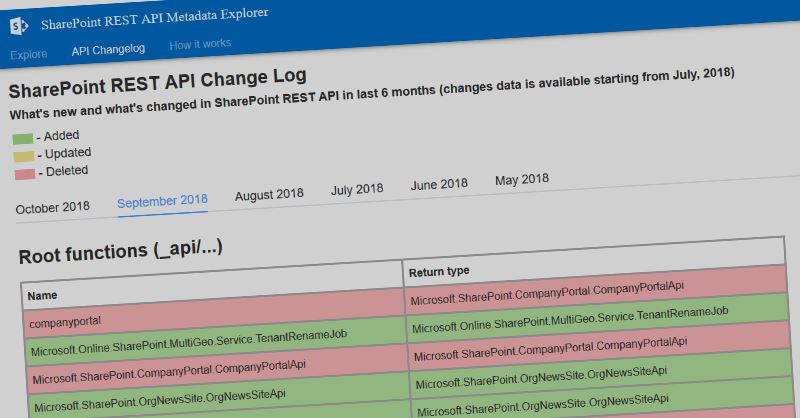

A few months ago I created a site where you can explore the SharePoint REST API - SharePoint REST API Metadata Explorer. With REST API explorer you can navigate between different endpoints, explore their structure, methods, classes and parameters. REST API explorer uses the _api/$metadata endpoint to get the REST API data, parses it and presents it in a tree view format. REST API explorer also stores historical $metadata results in Azure storage, making it possible to compare the $metadata results we have today with those from a month ago. I’ve added a new section to REST API explorer called SharePoint REST API Change Log. With the change log you can explore what has changed in SharePoint APIs in the last few months. More...
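To give a rough idea of what comparing two $metadata snapshots involves, here is a minimal TypeScript sketch. It is not the explorer's actual implementation. It assumes each snapshot has already been parsed from the $metadata XML into a plain object that maps a type name to its property names. The MetadataSnapshot shape, the compareSnapshots function and the other names are hypothetical and exist only for this illustration.

```typescript
// Assumed, simplified snapshot shape: type name -> property names.
// The real $metadata document is XML; parsing it is out of scope here.
type MetadataSnapshot = { [typeName: string]: string[] };

interface MetadataChange {
  addedTypes: string[];
  removedTypes: string[];
  addedProperties: { [typeName: string]: string[] };
  removedProperties: { [typeName: string]: string[] };
}

// Items present in `left` but missing from `right`, sorted for stable output.
function difference(left: string[], right: string[]): string[] {
  return left.filter(item => right.indexOf(item) === -1).sort();
}

function hasType(snapshot: MetadataSnapshot, typeName: string): boolean {
  return Object.prototype.hasOwnProperty.call(snapshot, typeName);
}

// Compares an older snapshot with a newer one and reports what changed.
function compareSnapshots(previous: MetadataSnapshot, current: MetadataSnapshot): MetadataChange {
  const previousTypes = Object.keys(previous);
  const currentTypes = Object.keys(current);

  const change: MetadataChange = {
    addedTypes: difference(currentTypes, previousTypes),
    removedTypes: difference(previousTypes, currentTypes),
    addedProperties: {},
    removedProperties: {}
  };

  // For types that exist in both snapshots, compare their property lists.
  for (const typeName of currentTypes) {
    if (!hasType(previous, typeName)) {
      continue;
    }
    const added = difference(current[typeName], previous[typeName]);
    const removed = difference(previous[typeName], current[typeName]);
    if (added.length > 0) {
      change.addedProperties[typeName] = added;
    }
    if (removed.length > 0) {
      change.removedProperties[typeName] = removed;
    }
  }

  return change;
}
```

Calling compareSnapshots with last month's snapshot as the first argument and today's as the second returns the types and properties that appeared or disappeared in between, which is the kind of information a change log can be built from.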

Some time ago I wrote a post on how to run PnP-PowerShell in your Azure DevOps build. The described method is a bit inconvenient (hello @waldekm :)), because you have to set up code which automatically installs the PnP-PowerShell module, and you have to repeat it for every PowerShell script step. What if I just want to put my PnP-PowerShell code in a file, run it and that's it?

To make things easier, I've created a custom build/release step for Visual Studio Team Services (now Azure DevOps) called (guess how) - PnP-PowerShell. This step significantly simplifies the way you run PnP-PowerShell commands in Azure DevOps. More...

- Classic pages you said?

- Yes! You read it right. MS Graph API from a classic SharePoint page. However, please read this first:

That’s not an official or recommended way. That’s just a proof of concept, which uses some tenant features introduced with SPFx 1.6. That’s something I decided to try out when SPFx 1.6 was out. Use it at your own risk.

When to use it? On classic pages if you don’t have an option to execute SPFx code.

So what if you want to call some MS Graph APIs from your classic SharePoint page? No problem then.

Before doing actual coding, we should check that we meet all prerequisites:

- You have SPFx 1.6 features which work without issues in your tenant. You can test this by creating a simple SPFx web part which uses MS Graph. Upload it to the app catalog, approve the request to MS Graph and check that it actually returns MS Graph data.

If the above works, you have everything needed for our experiments. More...

SPFx 1.6 was released recently and a lot of new and interesting features were introduced. My favorites are AadTokenProvider, AadHttpClient and MSGraphClient, which went to GA. One of the common things in SPFx development is accessing other resources protected with Azure AD. For example, you might have your LOB API with Azure AD protection and you want to consume that API from an SPFx web part (extension). Before SPFx 1.6 it was a bit challenging, because you had to deal with cookies attached to your asynchronous HTTP request or with a custom “patched” adal.js implementation. The SPFx 1.6 features mentioned earlier drastically simplify access to Azure AD protected resources. Now you can access Azure AD APIs (including Microsoft APIs like MS Graph) from SPFx with ease!
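The general pattern behind this kind of access can be sketched without SPFx itself: get an access token for the protected resource, then send it as a Bearer token with the request. The TypeScript below is only an illustration under that assumption. TokenSource, HttpSend, SimpleRequest, buildAuthorizedRequest and callProtectedApi are hypothetical names, not SPFx APIs. In an SPFx 1.6 web part, the token would typically come from AadTokenProvider's getToken method, or you would let AadHttpClient handle the whole flow for you.

```typescript
// Hypothetical stand-in for anything that can issue a token for a resource.
// An SPFx AadTokenProvider fits this shape through its getToken method.
interface TokenSource {
  getToken(resourceEndpoint: string): Promise<string>;
}

// Minimal request description used only in this sketch.
interface SimpleRequest {
  url: string;
  headers: { [name: string]: string };
}

// Hypothetical transport function; a real one could wrap fetch or an HTTP client.
type HttpSend = (request: SimpleRequest) => Promise<string>;

// Attaches the access token as a standard OAuth 2.0 Bearer authorization header.
function buildAuthorizedRequest(url: string, token: string): SimpleRequest {
  return {
    url: url,
    headers: {
      Authorization: "Bearer " + token,
      Accept: "application/json"
    }
  };
}

// Gets a token for the resource, then sends the authorized request.
function callProtectedApi(
  tokens: TokenSource,
  resourceEndpoint: string,
  url: string,
  send: HttpSend
): Promise<string> {
  return tokens
    .getToken(resourceEndpoint)
    .then(token => send(buildAuthorizedRequest(url, token)));
}
```

Keeping the token source and the transport as parameters is a design choice for the sketch only: it lets the same function run against a fake token provider in tests and a real one in a web part.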

I’m pretty sure you know about the PnPjs library. It has a lot of cool features, among them a fluent interface to the SharePoint and Graph APIs. With the SPFx 1.6 release you can use PnPjs as your Graph client without hassle. Read further to find out how. More...